Site architecture is critical when it comes to search engine optimization (SEO). It’s one of those things that you want to get right the first time.

However, you sometimes gain new insights after you thought you had it all figured out. Or you may find yourself working on a website that needs structural fixes because of decisions made by a previous team.

Whether your site needs a few tweaks or you’re planning a complete redesign, it’s worthwhile to examine its information architecture.

In this article, we will cover:

- What exactly is site architecture?

- SEO-friendly site architecture best practices

- Changing the architecture of your website

- Redesigning your website’s architecture

- But wait, you’re not finished yet!

What exactly is site architecture?

Site architecture is the structure that organizes and delivers the content on your website. It includes both the page hierarchy visitors use to find content and the technical considerations that allow search engine bots to crawl your site.

A good information architecture ensures that people and search engine crawlers can navigate your site effortlessly. It answers the questions “What do I do here?” and “What should I do next?”

Site architecture that has been optimized for SEO is easy and efficient for bots to crawl, which helps them find and display your content in search engine results pages (SERPs).

Best SEO-Friendly Site Architecture Practices

Google assigns each website a crawl budget, which roughly determines how many of your pages its bots will crawl. You want as many of your most important pages as possible to be crawled, understood, and indexed, and SEO architecture best practices pave the way for this.

Here are four best practices for SEO-friendly site architecture:

1. Use a URL structure that is SEO-friendly

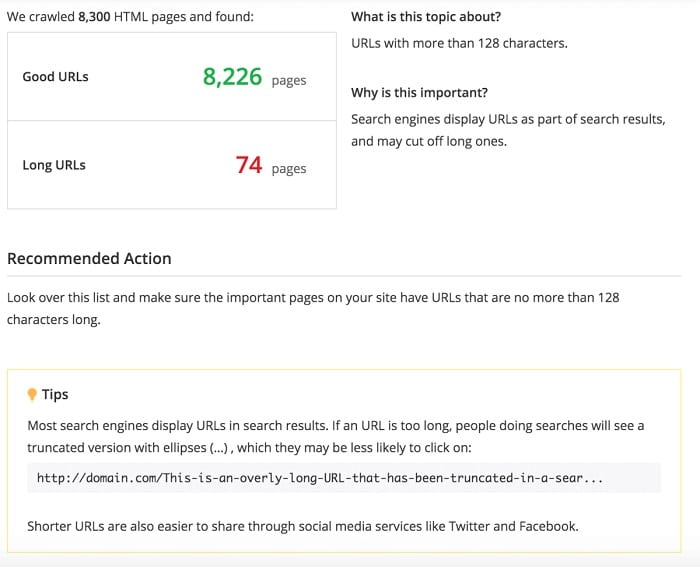

Google recommends keeping URLs simple, and short URLs are easier for both people and bots to read. Make sure your team keeps the following points in mind whenever they create a new page or blog post:

- Use lowercase words made up of alphanumeric characters.

- Join words with hyphens.

- Keep URLs short. (128 characters or fewer is a good starting point.)

- Make URLs readable for people. Use descriptive keywords and, where it fits, include the page’s main search query. (Learn more about keywords in URLs.)

- Use logical folders for content categories and subcategories.

- Don’t use session IDs in URLs.
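As a rough illustration of the rules above, here is a minimal Python sketch that builds a slug from a page title and flags URLs that break the guidelines. The 128-character limit comes from the list; the set of session-ID parameter names and the function names are assumptions for this example, not part of any particular tool.

```python
import re
import unicodedata
from urllib.parse import urlsplit, parse_qs

# Assumption: query parameters that commonly carry session IDs (not exhaustive).
SESSION_PARAMS = {"sid", "sessionid", "phpsessid", "jsessionid"}
MAX_URL_LENGTH = 128  # the starting point suggested in the list above


def slugify(title: str) -> str:
    """Turn a page title into a lowercase, hyphen-separated slug."""
    # Strip accents so "Café" becomes "Cafe", then lowercase everything.
    text = unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    text = text.lower()
    # Replace every run of non-alphanumeric characters with a single hyphen.
    text = re.sub(r"[^a-z0-9]+", "-", text)
    return text.strip("-")


def url_problems(url: str) -> list[str]:
    """Return the guideline violations found in a single URL."""
    problems = []
    parts = urlsplit(url)
    if len(url) > MAX_URL_LENGTH:
        problems.append("too long")
    if parts.path != parts.path.lower():
        problems.append("uppercase characters in path")
    if "_" in parts.path:
        problems.append("underscores instead of hyphens")
    query_keys = {key.lower() for key in parse_qs(parts.query)}
    # Some servers append the session ID as a path parameter: /page;jsessionid=...
    if query_keys & SESSION_PARAMS or ";jsessionid=" in parts.path.lower():
        problems.append("session ID in URL")
    return problems
```

For example, `slugify("10 Tips for SEO-Friendly URLs!")` returns `"10-tips-for-seo-friendly-urls"`, which already satisfies the lowercase, hyphen, and length rules.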

To quickly find overly long URLs, run a site audit with Alexa’s Site Audit tool. It will also detect broken links, which hurt SEO, along with other technical issues that are weakening your site’s SEO architecture.


2. Make use of a sitemap

Bots appreciate it when you make your content easy to access, and a sitemap is one way to do that. In fact, Google recommends using two types for efficient crawling: XML sitemaps and RSS/Atom feeds. XML sitemaps point bots to all the pages you want crawled, while RSS/Atom feeds highlight recent changes to those pages. Google also documents how to create sitemaps.
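To show what an XML sitemap contains, here is a minimal Python sketch that builds one with the standard library’s `xml.etree.ElementTree`. It follows the sitemaps.org 0.9 format (`urlset`, `url`, `loc`, `lastmod`); the example URLs and dates are hypothetical, and it covers only XML sitemaps, not RSS/Atom feeds.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, str]]) -> str:
    """Build an XML sitemap from (absolute URL, last-modified date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as "&" automatically.
        ET.SubElement(url, "loc").text = loc
        # lastmod uses W3C Datetime format, e.g. "2024-01-15".
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Hypothetical pages for illustration only.
    print(build_sitemap([
        ("https://www.example.com/", "2024-01-15"),
        ("https://www.example.com/pricing", "2024-02-01"),
    ]))
```

In practice you would publish the result at a URL on your site (for example, `/sitemap.xml`) and submit it in Google Search Console.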

3. Consider how pages connect to and from one another

Pay attention to anchor text, the clickable words in a link. Those words send an important signal, to both machines and people, about the content on the other end of the link.

Furthermore, where a page is linked from signals how prominent it is. Pages linked sitewide, such as those in your main navigation, are generally treated by search engines as the most important, because they are only one click away from every other page on your site.
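Checking anchor text by hand gets tedious on a large site. The sketch below uses Python’s built-in `html.parser` to collect every link’s `href` and anchor text, then flags links whose text is empty or generic. The `GENERIC_ANCHORS` set and the function names are hypothetical starting points; tune them for your own content.

```python
from html.parser import HTMLParser

# Assumption: anchor texts that say nothing about the page being linked to.
GENERIC_ANCHORS = {"click here", "here", "read more", "learn more", "this page"}


class LinkCollector(HTMLParser):
    """Collect (href, anchor text) pairs from an HTML document."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None  # href of the <a> tag we are currently inside
        self._text = []    # text fragments seen inside that tag

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            # Collapse whitespace so "Read   more" matches "read more".
            text = " ".join("".join(self._text).split())
            self.links.append((self._href, text))
            self._href = None


def weak_anchors(html: str) -> list[tuple[str, str]]:
    """Return links whose anchor text is empty or generic."""
    parser = LinkCollector()
    parser.feed(html)
    return [(href, text) for href, text in parser.links
            if not text or text.lower() in GENERIC_ANCHORS]
```

An image-only link counts as empty here, since its `alt` text is not collected; that is a simplification you may want to relax.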

Every page should be reachable in fewer than five clicks. The Reachability section of the Alexa Site Audit tool shows how many clicks it takes our crawler to find each page and gives you a list of unreachable pages.
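Click depth is a shortest-path problem: a breadth-first search from the homepage over your internal links gives the minimum number of clicks needed to reach each page. The sketch below assumes you already have the link graph as a dictionary (for instance, exported from a crawl) whose keys include every page you expect to exist, such as the URLs in your sitemap. The function names and sample paths are hypothetical, and this is not a description of how any specific audit tool works internally.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search from the homepage: fewest clicks to reach each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def audit(links: dict[str, list[str]], home: str = "/", limit: int = 5):
    """Return (pages needing `limit` or more clicks, unreachable pages)."""
    depths = click_depths(links, home)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    too_deep = sorted(p for p, d in depths.items() if d >= limit)
    unreachable = sorted(all_pages - set(depths))
    return too_deep, unreachable


if __name__ == "__main__":
    # Hypothetical internal link graph: page -> pages it links to.
    site = {
        "/": ["/blog", "/pricing"],
        "/blog": ["/blog/post-1"],
        "/blog/post-1": ["/blog/post-2"],
        "/blog/post-2": ["/blog/post-3"],
        "/blog/post-3": ["/blog/post-4"],
        "/blog/post-4": [],
        "/pricing": [],
        "/old-landing": ["/pricing"],  # nothing links here
    }
    print(audit(site))
```

With the sample graph, `/blog/post-4` takes five clicks and `/old-landing` is unreachable, so both would need better internal links.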

4. Provide a Safe and User-Friendly Experience

Search engines want the sites they display to deliver a positive user experience, so they reward sites that pay attention to:

- Speed: Wherever possible, reduce the time it takes for a page to load.

- Mobile-friendliness: Ensure that your site renders properly on various screen sizes.

- Security: Serve your website over HTTPS, using the SSL/TLS protocol.

- Clear navigation: Avoid cluttering pages with an excessive number of links.

Now that we’ve established some ground rules, let’s take a look at how to go about altering or redesigning your website architecture.

Changing Your Site Architecture

If you expect a few fundamental changes to deliver a significant improvement in performance, these common problems are a smart place to start.

1. Increase efficiency and eliminate crawl budget waste

If you don’t already have a sitemap, set one up to help bots find your pages more easily. You can also use a robots.txt file to tell search engines which pages or sections of your site not to crawl; search engines check for this file before crawling your site. Finally, make sure you don’t have too many redirects set up, since each extra hop reduces bot efficiency.
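You can check how a robots.txt file treats specific URLs with Python’s standard `urllib.robotparser` module. The rules and URLs below are hypothetical. Note that this parser uses simple prefix matching and does not implement every extension Google supports (such as `*` and `$` wildcards), so treat it as a rough check rather than a replica of Googlebot.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that keeps crawlers out of low-value sections.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
Sitemap: https://www.example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for path in ["/products/blue-widget", "/cart/checkout", "/search?q=widgets"]:
    allowed = parser.can_fetch("Googlebot", "https://www.example.com" + path)
    print(path, "crawlable" if allowed else "blocked")
# /products/blue-widget crawlable
# /cart/checkout blocked
# /search?q=widgets blocked
```

Blocking cart and internal search pages like this keeps bots focused on the product and content pages you actually want crawled.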

2. Deal with pages that appear to have duplicate content

A common cause of duplicate content is having both the www and non-www versions of a site indexed. To Google, these can look like two distinct websites. The same thing can happen with the HTTP and HTTPS versions. Make sure every version of your website resolves to a single preferred version; on an Apache server, a 301 redirect in your .htaccess file will solve the problem.
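The redirect itself lives in your server configuration (for example, `.htaccess` on Apache), but the logic it needs to implement is simple: map every scheme and host variant to one preferred version. The Python sketch below illustrates only that mapping. It assumes HTTPS on `www.example.com` is the preferred version; both the host and the function name are hypothetical.

```python
from typing import Optional
from urllib.parse import urlsplit, urlunsplit

# Assumption: HTTPS on the www host is the preferred version of the site.
CANONICAL_SCHEME = "https"
CANONICAL_HOST = "www.example.com"


def redirect_target(url: str) -> Optional[str]:
    """Return the URL a 301 redirect should point to, or None if none is needed."""
    parts = urlsplit(url)
    # Treat the bare domain and the www host as the same site.
    host = (parts.hostname or "").removeprefix("www.")
    if host != CANONICAL_HOST.removeprefix("www."):
        return None  # a different site; not ours to redirect
    canonical = urlunsplit(
        (CANONICAL_SCHEME, CANONICAL_HOST, parts.path or "/", parts.query, "")
    )
    return None if canonical == url else canonical
```

For example, `http://example.com/pricing` maps to `https://www.example.com/pricing`, while the canonical URL itself needs no redirect. Keeping every variant pointed at one target in a single hop also avoids the redirect chains mentioned earlier.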

Our duplicate content guide covers other common causes, and you can then use Alexa’s Advanced plan to scan your website for duplicate content.

3. Investigate technical site architecture elements

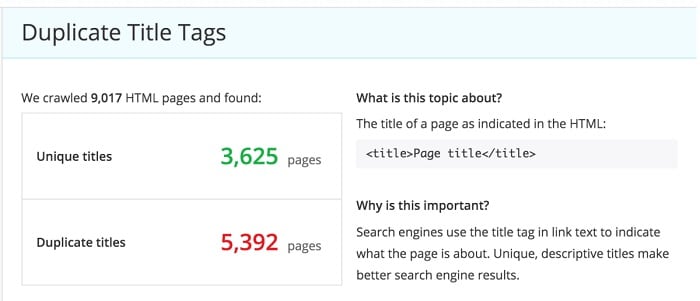

Look into technical issues such as server errors, and check that each page has unique, optimized title and meta tags.

How do you find problems like these? Alexa’s Site Audit tool will scan your site and tell you what needs to be fixed, along with helpful ideas and recommendations. The screenshot above shows the Site Audit tool checking a site for duplicate title tags.
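If you want to spot-check duplicate title tags yourself, a small script can group pages by title. This sketch assumes you already have each page’s HTML in a dictionary keyed by URL, and it uses a simple regular expression rather than a full HTML parser, so treat it as a quick check rather than a replacement for an audit tool. Pages with no title are grouped under an empty string, which also surfaces missing titles.

```python
import re
from collections import defaultdict

TITLE_RE = re.compile(r"<title[^>]*>(.*?)</title>", re.IGNORECASE | re.DOTALL)


def duplicate_titles(pages: dict[str, str]) -> dict[str, list[str]]:
    """Map each title used by more than one page to the URLs that share it."""
    by_title = defaultdict(list)
    for url, html in pages.items():
        match = TITLE_RE.search(html)
        # Collapse whitespace so "About  us" and "About us" count as the same title.
        title = " ".join(match.group(1).split()) if match else ""
        by_title[title].append(url)
    return {title: sorted(urls) for title, urls in by_title.items() if len(urls) > 1}
```

Each title in the result marks a group of pages that search engines may struggle to tell apart, so each page in the group needs its own distinct title.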

Redesigning Your Site Architecture

If your website has information architecture issues, or if you’re building a new website, you’ll need to plan ahead. Follow these steps to decide how to organize the new site structure.

Step 1: Create user stories and personas

First, determine what users need to be able to do on the website. Revisit your content strategy to better understand who you’re trying to reach and how you’ll reach them. Personas are also useful, and mapping out your customers’ journeys will give you a clear picture of each type of interaction visitors will have with your site.

Step 2: Use keyword data to help define your content structure

Keyword research can provide invaluable insights into your users’ information needs. It immerses you in their world and vocabulary, which can be difficult for employees who have grown accustomed to their own internal language. You’ll come across terms and ideas you hadn’t considered before.

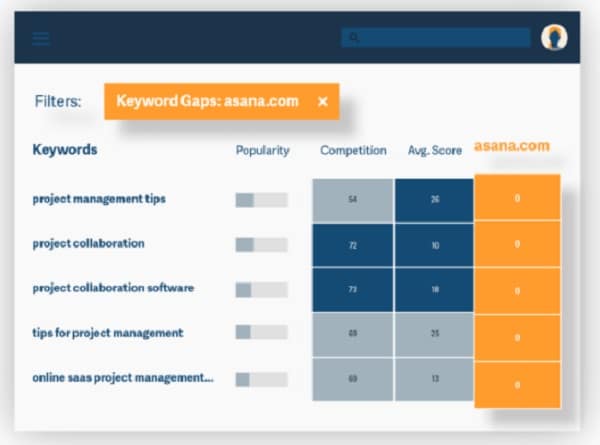

Alexa can also help with keyword research, showing you which topics your competitors cover well and where you can bring in your unique perspective.

Step 3: Plan out the pages you’ll need

If you’re working on an existing website, start with a content audit. Then, whether you’re starting from scratch or rebuilding an existing site, a sitemap or wireframe can help you plan how content is arranged.

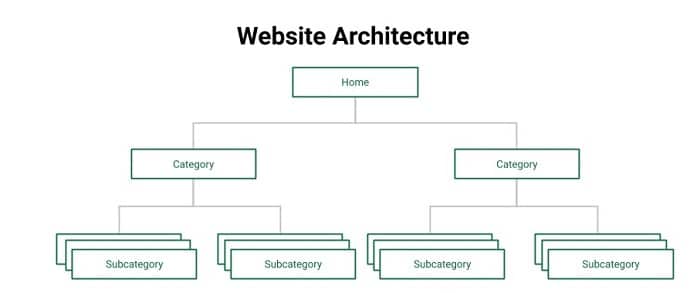

Consider how pages relate to one another as you create a folder structure for your website’s offerings and information. Use logical categories that progress from the largest to the smallest groupings (broad to narrow focus).

GoDaddy organizes its content by product line. All content related to its phone number app can be found in the SmartLine folder.

Fundera organizes its informational content in a blog, taking care to keep URLs as short as possible:

https://www.fundera.com/blog/loan-for-taxi-business

Put your most critical pages in the root directory, the level directly after the domain name. Keeping pages close to the root is an SEO tactic used to give more weight to search-optimized landing pages. (Use it with caution: putting too many pages in the root directory dilutes the effect.)

A landing page in the root directory:

website.com/seo-landing-page/

… as opposed to a landing page in a more distant directory:

website.com/category/subcategory/seo-landing-page/
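A quick way to compare how far pages sit from the root is to count the folders in each URL’s path. This small helper (a hypothetical name, not a standard tool) does that for URLs written with or without a scheme.

```python
from urllib.parse import urlsplit


def folder_depth(url: str) -> int:
    """Count path segments after the domain (0 means the homepage)."""
    # urlsplit needs a scheme to recognize the domain, so add one if missing.
    if "://" not in url:
        url = "https://" + url
    path = urlsplit(url).path
    return len([segment for segment in path.split("/") if segment])
```

For the two examples above, `folder_depth("website.com/seo-landing-page/")` returns 1 and `folder_depth("website.com/category/subcategory/seo-landing-page/")` returns 3.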

You’ll also want to think about navigation as you develop the layout of your pages. How will visitors get from one part of the site to another? Which pages will be linked from the main menu? Will you use breadcrumbs? How easily a page can be reached through navigation matters more to Google than its folder structure.
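If you do use breadcrumbs, one widely used way to describe them to search engines is schema.org `BreadcrumbList` structured data in JSON-LD. The sketch below derives a breadcrumb trail from a URL’s folder structure. The function name and the label fallback (title-casing the slug) are assumptions; in practice, your breadcrumb labels should match the ones visitors see on the page.

```python
import json
from urllib.parse import urlsplit


def breadcrumb_jsonld(url: str, labels: dict[str, str]) -> str:
    """Build schema.org BreadcrumbList JSON-LD from a URL's folder path.

    `labels` maps a path segment to its visible breadcrumb text; any segment
    without a label falls back to a title-cased version of its slug.
    """
    parts = urlsplit(url)
    segments = [s for s in parts.path.split("/") if s]
    trailing = "/" if parts.path.endswith("/") else ""  # keep the site's URL style
    items = []
    path = ""
    for position, segment in enumerate(segments, start=1):
        path += "/" + segment
        items.append({
            "@type": "ListItem",
            "position": position,
            "name": labels.get(segment, segment.replace("-", " ").title()),
            "item": f"{parts.scheme}://{parts.netloc}{path}{trailing}",
        })
    data = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": items,
    }
    return json.dumps(data, indent=2)
```

The resulting JSON would go inside a `<script type="application/ld+json">` element on the page it describes.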

Don’t forget to factor in business objectives. That means accounting for the path to leads and/or sales in your SEO architecture. You should also plan for expansion into new markets, whether that means new industries, new products, or new geographic regions, so that your website’s content structure can grow along with your company.

Step 4: Perform user testing

It’s critical to collect feedback at various stages. Consult colleagues at the wireframe stage. When you have a prototype or an early version of your site, run usability testing: give representative users tasks to complete and collect their feedback. Early feedback can either confirm that you’re on the right track or show you where you’ve gone wrong before you invest too much time and money in rebuilding the website.

Now that you’ve laid a solid foundation, you’re ready to build your new content structure with SEO architecture in mind.

You’re not finished yet!

Once you’ve implemented your ideal site architecture, you’ll need to maintain it. Websites tend to drift as they grow: pages get created in the wrong subfolders, duplicate copies appear without anyone noticing, and so on. What’s the best way to stay on top of things? Set up an automated report that notifies you when new issues arise.
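The core of such a report is a comparison between two audit runs. This minimal sketch assumes each audit is stored as a dictionary mapping page URLs to sets of issue labels (a hypothetical format; real audit tools export their own) and returns only the issues that are new since the previous run, which is what you would include in an alert.

```python
def new_issues(previous: dict[str, set[str]],
               current: dict[str, set[str]]) -> dict[str, set[str]]:
    """Return issues found in the current audit that were absent from the previous one."""
    report = {}
    for url, issues in current.items():
        # Pages missing from the previous audit count as having had no issues.
        added = issues - previous.get(url, set())
        if added:
            report[url] = added
    return report


if __name__ == "__main__":
    # Hypothetical audit snapshots.
    last_week = {"/about": {"missing meta description"}, "/pricing": set()}
    this_week = {
        "/about": {"missing meta description"},
        "/pricing": {"duplicate title"},
        "/blog/draft": {"orphan page"},
    }
    print(new_issues(last_week, this_week))
```

Issues that were already known (such as the missing description on `/about`) are left out, so the alert only highlights what changed.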
